How to Scrape Dynamic E-Commerce Product Pages in Python Using BeautifulSoup and Selenium?

This blog shows how you can scrape dynamic e-commerce product pages in Python using BeautifulSoup and Selenium, and how 3i Data Scraping can help you.


Web Scraping in Python using BeautifulSoup and Selenium

There are many Python libraries you can use for data scraping, and plenty of online tutorials on how to get started.

Today, we will discuss scraping e-commerce product data from dynamic pages, concentrating on how to do it with BeautifulSoup and Selenium.

Usually, e-commerce product list pages are dynamic, producing different product details for different users. For example, airline prices change depending on the user's location, or products are ranked by relevance based on browsing behavior. The product information is generally populated in the browser using JavaScript. That is where Selenium comes in: it can programmatically load and interact with web pages in a browser. We can then use BeautifulSoup to parse the page source and scrape the required product data from the HTML elements.

This blog will show how to extract product data from pages like these automatically.

…into a clean and usable format for analysis.

Why do this? Tracking your competitors, comparing prices across retailers, and analyzing market trends are only some of the practical applications.


Installation

This blog uses Pandas, BeautifulSoup, and Selenium. The more advanced steps also use re, requests, and time; re and time ship with Python, so of those only requests needs installing. The examples parse pages with the lxml parser, which is a separate package. If you don't have everything installed, the easiest route is pip.

pip install pandas

pip install selenium

pip install beautifulsoup4

pip install lxml

pip install requests

We also need a web driver. For Chrome, for instance, download ChromeDriver and place the executable in one of the directories on your PATH. (Selenium 4.6 and later can usually fetch a matching driver automatically through Selenium Manager.)

Page Scraping

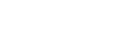

For the demo, we will scrape books.toscrape.com, a fictional bookstore. Its pages are static rather than dynamic, but the approach works the same way.

import pandas as pd

from selenium import webdriver

from bs4 import BeautifulSoup

import re

import requests

import time

url = 'https://books.toscrape.com/catalogue/page-1.html'

driver = webdriver.Chrome()

driver.implicitly_wait(30)

driver.get(url)

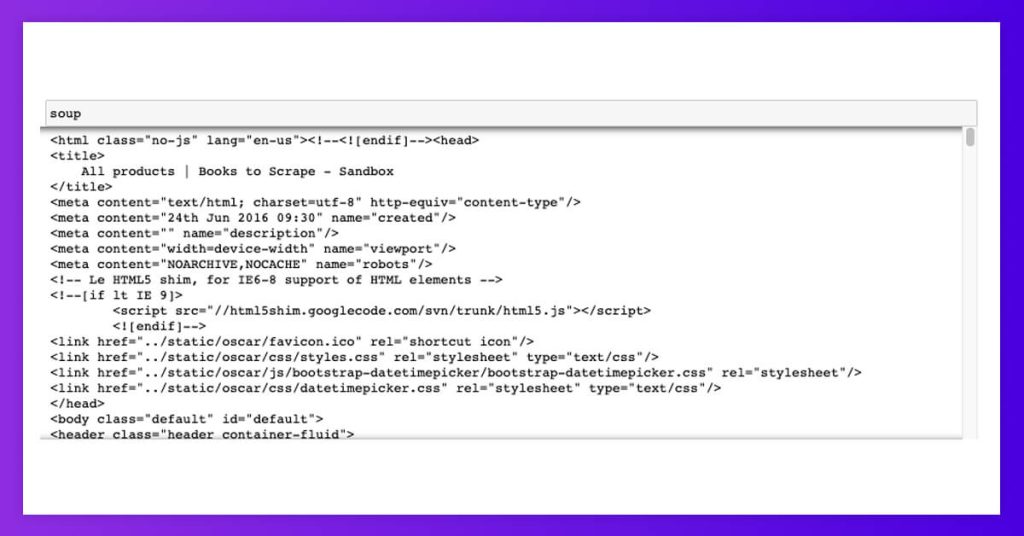

soup = BeautifulSoup(driver.page_source,'lxml')

driver.quit()

The code above loads the URL in a Chrome browser, passes the page source to BeautifulSoup, and ends the browser session. Note that implicitly_wait(30) only sets how long (in seconds) Selenium keeps looking when it is asked to find an element; it does not pause before page_source is read. For pages that take a long time to populate, you may need to experiment with how, and for how long, you wait.

Our soup looks like this. It’s time to start scraping useful elements!

Scraping Elements

To get an element, we can filter by its tag name or by an attribute name and value.

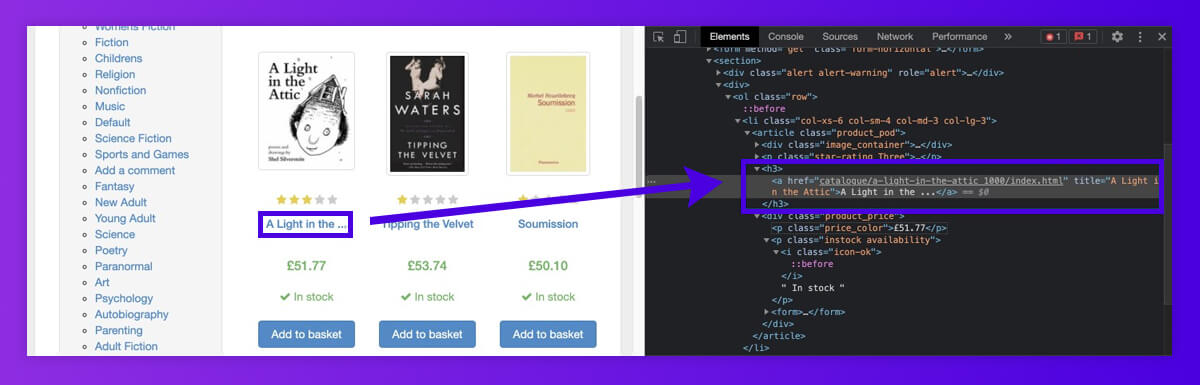

To scrape all product names on the first page of the fictional bookstore, let's identify which elements they are stored in. It looks like the text is consistently stored in an <h3> tag.

soup.find() gets the first element that matches our filter: the tag name matches 'h3'.

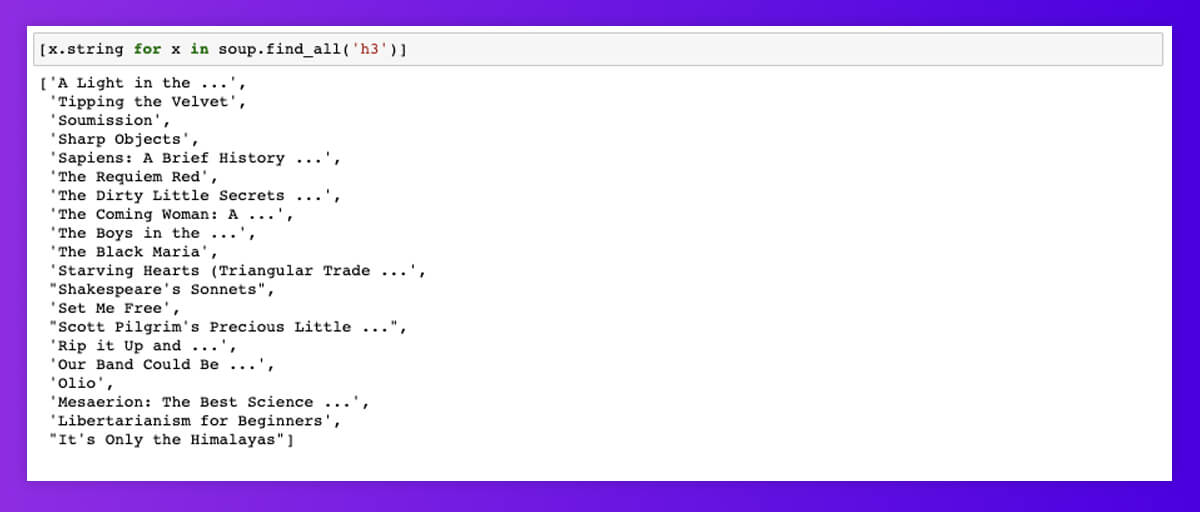

Adding .string returns only the element's text.
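The two steps above can be tried without a browser. This is a small sketch on hand-written sample markup (an assumption, not copied from the site), parsed with the built-in html.parser:

```python
from bs4 import BeautifulSoup

# Hand-written sample markup (an assumption, not copied from the site).
html = """
<ol>
  <li><h3><a href="book-1.html">Sample Book One</a></h3></li>
  <li><h3><a href="book-2.html">Sample Book Two</a></h3></li>
</ol>
"""
soup = BeautifulSoup(html, "html.parser")

first = soup.find("h3")  # first element whose tag name is 'h3'
print(first)             # the whole tag, including the nested <a>
print(first.string)      # only the text inside it
```

Because the h3 tag has a single child, .string reaches through the nested link and returns its text.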

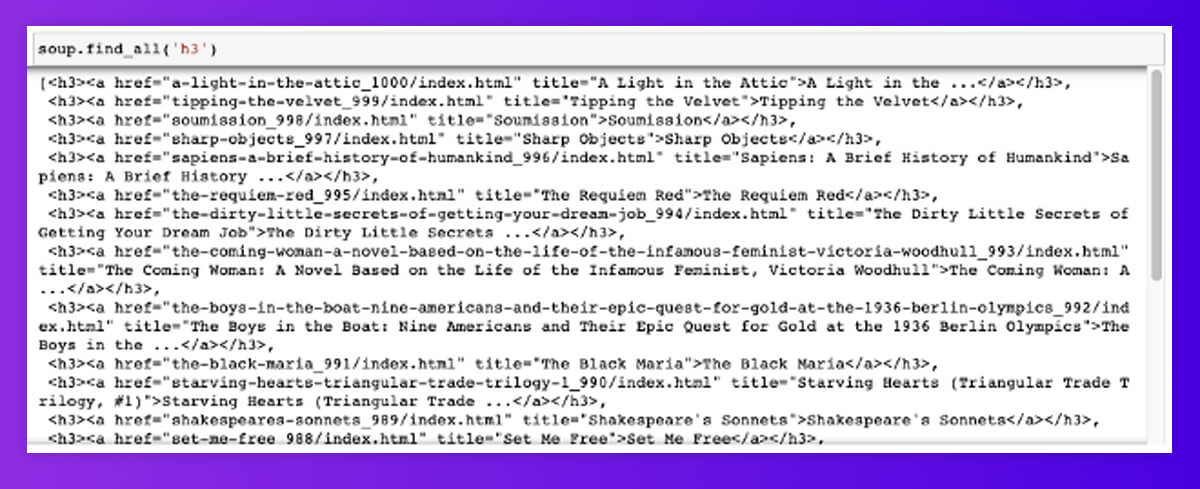

soup.find_all() gets all the elements that match our filter and returns them in a list. Note: soup.find_all() and soup() work the same way, in case you're a fan of brevity.

Finally, we loop through the list. Using .string in a list comprehension returns each element's text. Now we have a list of 20 product names!
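As a minimal sketch of the same idea, again on hand-written sample markup (an assumption) with only two products instead of 20:

```python
from bs4 import BeautifulSoup

# Hand-written sample markup (an assumption, not copied from the site).
html = """
<ol>
  <li><h3><a href="book-1.html">Sample Book One</a></h3></li>
  <li><h3><a href="book-2.html">Sample Book Two</a></h3></li>
</ol>
"""
soup = BeautifulSoup(html, "html.parser")

headings = soup.find_all("h3")         # every <h3>, in document order
names = [h.string for h in headings]   # text of each heading
print(names)

# Calling the soup object directly is shorthand for find_all().
same_headings = soup("h3")
```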

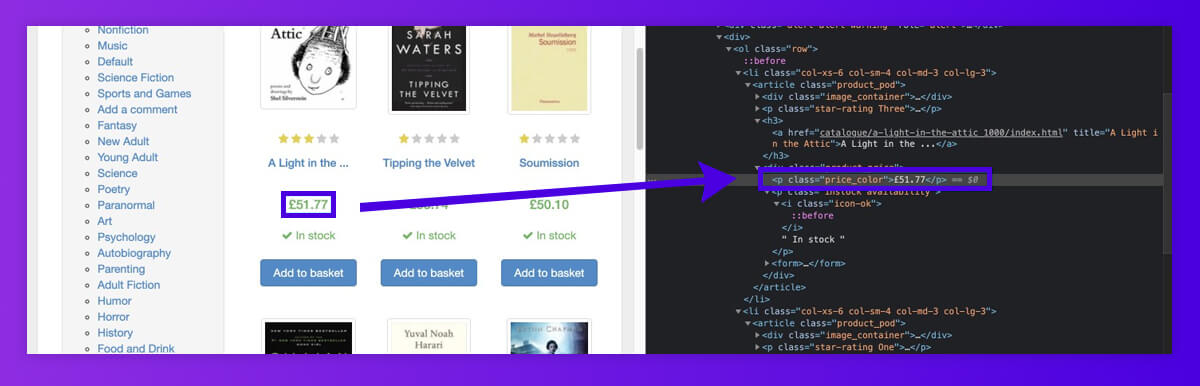

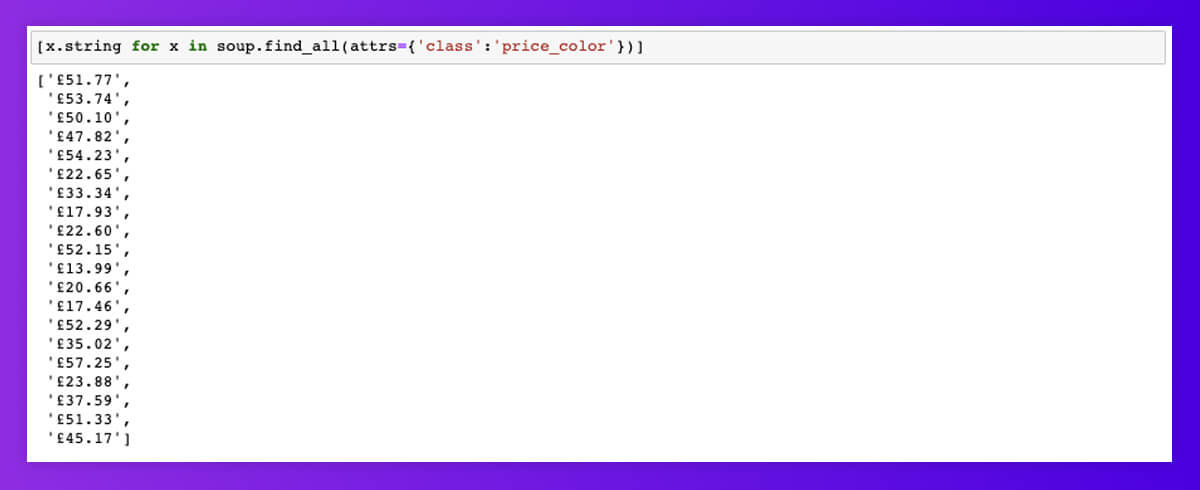

The same can be done for the other product details. To find all product prices, we filter by the attribute name 'class' and the attribute value 'price_color'.

You could stop here and work with separate lists of product details, and that may work well for websites with clean HTML. However, e-commerce websites are not always that tidy, and troubleshooting the exceptions can be the most time-consuming part of the process.
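Filtering by attribute can be sketched like this. The markup and the prices in it are made up for illustration; only the class name price_color comes from the text above.

```python
from bs4 import BeautifulSoup

# Hand-written sample markup with made-up prices (an assumption).
html = """
<article><h3><a>Sample Book One</a></h3><p class="price_color">£10.00</p></article>
<article><h3><a>Sample Book Two</a></h3><p class="price_color">£12.50</p></article>
"""
soup = BeautifulSoup(html, "html.parser")

# Match any tag whose class attribute is 'price_color'.
price_tags = soup.find_all(attrs={"class": "price_color"})
prices = [tag.string for tag in price_tags]
print(prices)
```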

Missing Elements

This is the most common exception we have encountered.

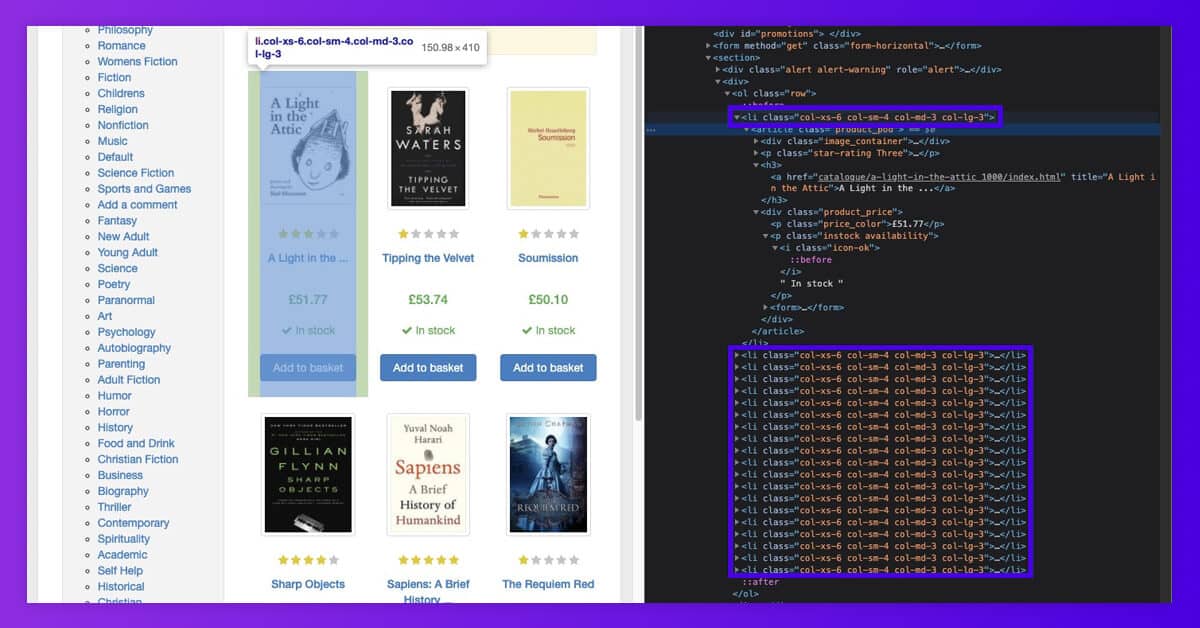

What happens when elements are missing for specific products? For instance, a product may be temporarily unavailable, so its tile has no price tag. Rather than getting a null value in the list, we get a price list that is shorter than the list of product names, and risk matching prices to the wrong products.
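The problem is easy to reproduce on a small scale. In this sketch the markup is hand-written (an assumption), and the second product deliberately has no price tag:

```python
from bs4 import BeautifulSoup

# Hand-written sample markup (an assumption); the second tile has no price.
html = """
<article><h3><a>Sample Book One</a></h3><p class="price_color">£10.00</p></article>
<article><h3><a>Sample Book Two</a></h3></article>
<article><h3><a>Sample Book Three</a></h3><p class="price_color">£30.00</p></article>
"""
soup = BeautifulSoup(html, "html.parser")

names = [h.string for h in soup.find_all("h3")]
prices = [p.string for p in soup.find_all(attrs={"class": "price_color"})]

print(len(names), len(prices))  # the two lists differ in length

# zip() pairs items by position, so the second book picks up the
# third book's price and the third book is dropped entirely.
pairs = list(zip(names, prices))
print(pairs)
```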

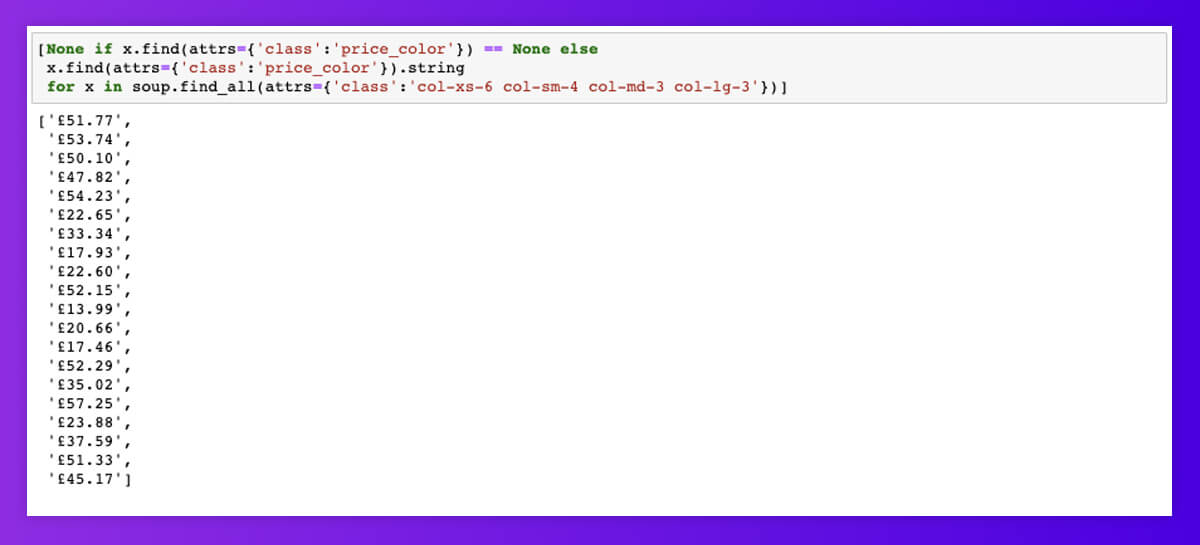

To avoid that, we found it best to first filter to the outer elements, each of which contains all the data for one product, and then, within every outer element, get the particular inner elements such as the product's name and price. We can add a condition that returns a null value if an inner element is missing from a product tile. This ensures all the product data stays in the same order across our lists.

[return null value if inner element is missing else return text of inner element for x in all outer elements]
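A minimal runnable version of that pattern might look as follows. The markup is hand-written (an assumption), with article standing in for the outer element and the second product missing its price:

```python
from bs4 import BeautifulSoup

# Hand-written sample markup (an assumption); the second tile has no price.
html = """
<article><h3><a>Sample Book One</a></h3><p class="price_color">£10.00</p></article>
<article><h3><a>Sample Book Two</a></h3></article>
<article><h3><a>Sample Book Three</a></h3><p class="price_color">£30.00</p></article>
"""
soup = BeautifulSoup(html, "html.parser")

tiles = soup.find_all("article")  # outer elements: one per product

# Look inside each tile; use None when the inner element is absent.
names = [
    None if t.find("h3") is None else t.find("h3").string
    for t in tiles
]
prices = [
    None
    if t.find(attrs={"class": "price_color"}) is None
    else t.find(attrs={"class": "price_color"}).string
    for t in tiles
]
print(list(zip(names, prices)))
```

Every list now has one entry per tile, so position i always refers to the same product.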

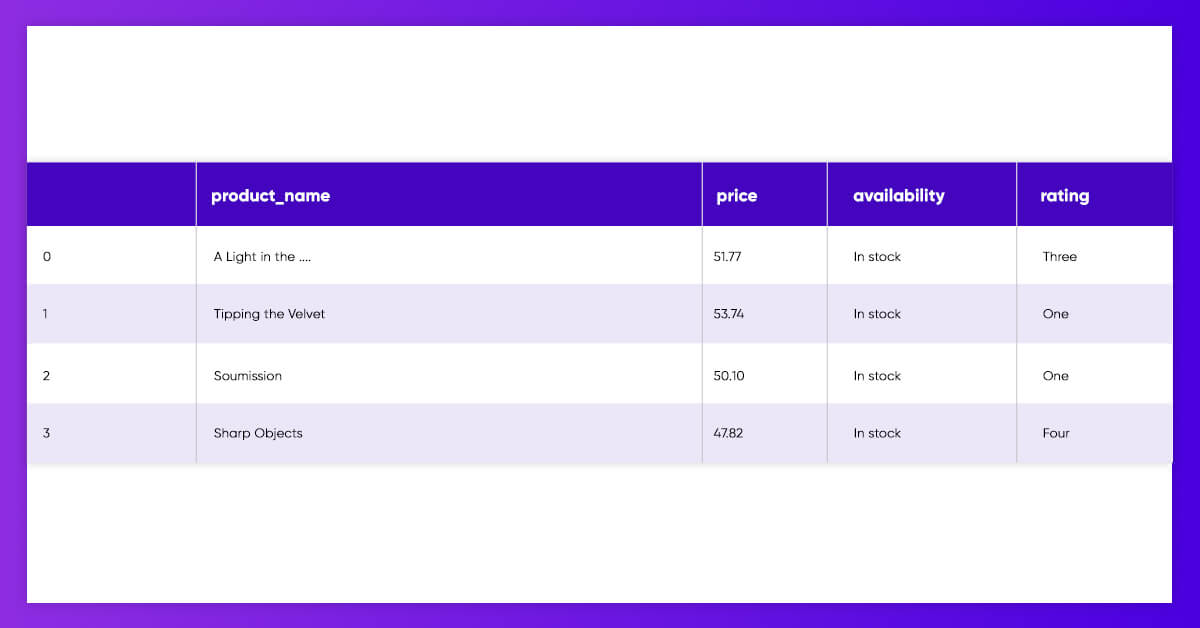

Put it all together in a Pandas DataFrame

tiles = soup.find_all(attrs={'class':'col-xs-6 col-sm-4 col-md-3 col-lg-3'}) # Outer elements: one tile per product.

df = pd.DataFrame(list(zip([None if x.find('h3') is None else x.find('h3').string for x in tiles],

[None if x.find(attrs={'class':'price_color'}) is None else x.find(attrs={'class':'price_color'}).string.replace('£','') for x in tiles],

[None if x.find(attrs={'class':'instock availability'}) is None else x.find(attrs={'class':'instock availability'}).text.strip() for x in tiles],

[None if x.find(attrs={'class':re.compile(r'star-rating$')}) is None else x.find(attrs={'class':re.compile(r'star-rating$')}).get('class')[1] for x in tiles])),

columns=['product_name','price','availability','rating'])

We can put the lists of product data straight into a Pandas DataFrame and name each column.

For all the ways you can navigate elements, see the BeautifulSoup documentation.

This is also a good time to preprocess some features, such as removing currency symbols and the whitespace around text.

To make this easy to reuse, we can wrap it in a function that scrapes a page with a single line of code.
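The same clean-up can also be done on the DataFrame after it is built. The sketch below uses made-up raw values (an assumption) of the kind the scraper might collect; converting the rating word to a number is an optional extra that goes beyond the steps described here.

```python
import pandas as pd

# Made-up raw values for illustration (an assumption).
raw = pd.DataFrame({
    "price": ["£51.77", " £53.74 ", None],
    "availability": ["\n    In stock\n", "In stock", None],
    "rating": ["Three", "One", "Five"],
})

clean = raw.copy()

# Drop the currency symbol and surrounding whitespace, then convert to numbers.
clean["price"] = pd.to_numeric(
    clean["price"].str.replace("£", "", regex=False).str.strip()
)

# Trim the whitespace around the availability text.
clean["availability"] = clean["availability"].str.strip()

# Optional: turn the rating word into a number.
rating_words = {"One": 1, "Two": 2, "Three": 3, "Four": 4, "Five": 5}
clean["rating"] = clean["rating"].map(rating_words)

print(clean)
```

Missing values pass through these steps as missing rather than raising an error, which suits data where some tiles lack a price.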

def scrape_page(url):

    driver = webdriver.Chrome()

    driver.implicitly_wait(30)

    driver.get(url)

    soup = BeautifulSoup(driver.page_source,'lxml')

    driver.quit()

    tiles = soup.find_all(attrs={'class':'col-xs-6 col-sm-4 col-md-3 col-lg-3'}) # Outer elements: one tile per product.

    df = pd.DataFrame(list(zip([None if x.find('h3') is None else x.find('h3').string for x in tiles],

    [None if x.find(attrs={'class':'price_color'}) is None else x.find(attrs={'class':'price_color'}).string.replace('£','') for x in tiles],

    [None if x.find(attrs={'class':'instock availability'}) is None else x.find(attrs={'class':'instock availability'}).text.strip() for x in tiles],

    [None if x.find(attrs={'class':re.compile(r'star-rating$')}) is None else x.find(attrs={'class':re.compile(r'star-rating$')}).get('class')[1] for x in tiles])),

    columns=['product_name','price','availability','rating'])

    return df

scrape_page('https://books.toscrape.com/catalogue/page-1.html')

Pagination

To scrape products that span several pages, we can write a function that iterates through each page's URL and combines the DataFrames from all the scraped pages.

def scrape_multiple_pages(url,pages):

    # Input parameters: url template and number of pages to scrape. Put {} in place of the page number in url.

    columns = ['product_name','price','availability','rating']

    frames = []

    for page in range(1, pages + 1): # Loops through each page number in url.

        if requests.get(url.format(page)).status_code == 200: # If the url returns an OK 200 response, scrape the page.

            frames.append(scrape_page(url.format(page)))

            time.sleep(5) # Wait 5 seconds.

        else:

            break

    # DataFrame.append was removed in pandas 2.0, so the page DataFrames are combined with pd.concat.

    return pd.concat(frames, ignore_index=True) if frames else pd.DataFrame(columns=columns)

scrape_multiple_pages('https://books.toscrape.com/catalogue/page-{}.html',pages=2)

In the url parameter, we populate the page number dynamically using {} and .format(). The pages parameter defines the maximum number of pages to scrape, beginning at page 1.

We have also added extra steps: a page is scraped only if its URL returns an OK 200 response, and the function sleeps for 5 seconds between pages.
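The URL templating on its own can be sketched without any network calls. The variable names here are illustrative assumptions; the template is the one used above.

```python
# The {} placeholder is replaced by each page number in turn.
template = "https://books.toscrape.com/catalogue/page-{}.html"
pages = 3  # maximum number of pages, starting at page 1

urls = [template.format(number) for number in range(1, pages + 1)]

for page_url in urls:
    print(page_url)
```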

Conclusion

Here are some things to think about:

- It's important to consider how much you scrape, as extra server calls can add up quickly.

- Because we need to load the pages and run their JavaScript, this technique is slow by programmatic standards and does not scale well.

- As with any scraping, the element selection has to be tailored to each site, since HTML structures differ across websites.

However, for scraping a handful of pages at a time, it is a straightforward and helpful solution that takes only a few lines of code.

For more information, contact 3i Data Scraping or ask for a free quote!
